AI pioneer Andrew Ng said that he has devised a new type of test to determine whether artificial general intelligence (AGI) has arrived, in response to growing hype around the term and its misuse by several businesses. Stating that AGI currently lacks a precise meaning, the Coursera co-founder said that existing views on this hypothetical level of intelligence can be countered with a new benchmark he calls the ‘Turing-AGI’ test.

Under the proposed test, an AI system and a skilled human professional are each given a computer with internet access and software such as a web browser and Zoom. Each then uses the computer to carry out work tasks over a ‘multi-day experience’, such as taking calls as a call centre operator.

“A computer passes the Turing-AGI Test if it can carry out the work task as well as a skilled human,” Ng wrote in a post on X. “Most members of the public likely believe a real AGI system will pass this test. Surely, if computers are as intelligent as humans, they should be able to perform work tasks as well as a human one might hire. Thus, the Turing-AGI Test aligns with the popular notion of what AGI means,” he added.

He further argued that the original Turing Test, “which required a computer to fool a human judge, via text chat, into being unable to distinguish it from a human, has been insufficient to indicate human-level intelligence.”

Happy 2026! Will this be the year we finally achieve AGI? I’d like to propose a new version of the Turing Test, which I’ll call the Turing-AGI Test, to see if we’ve achieved this. I’ll explain in a moment why having a new test is important.

The public thinks achieving AGI means…

— Andrew Ng (@AndrewYNg) January 6, 2026

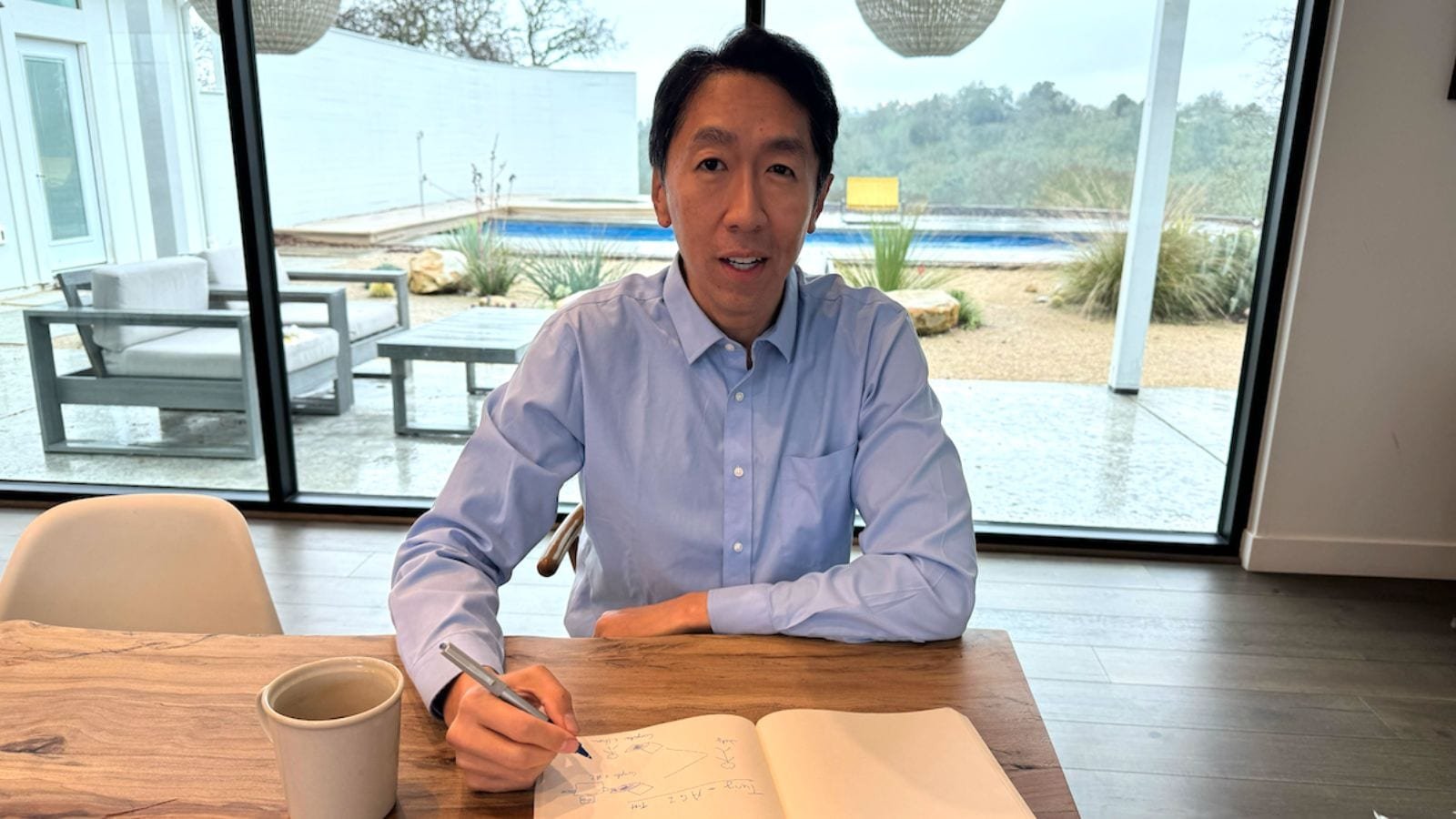

Andrew Ng is one of the most influential voices in AI globally. He has previously headed Google Brain and was the chief AI scientist at Chinese tech giant Baidu. Besides Coursera, Ng has also founded another AI-focused learning platform called DeepLearning.ai and serves as an adjunct professor of computer science at Stanford University.

His remarks come amid a rapidly intensifying debate among top AI researchers and industry leaders about what AGI actually means and whether modern-day large language models (LLMs) are capable of achieving it.

In December last year, famed AI researcher Yann LeCun and Google DeepMind CEO Demis Hassabis publicly disagreed with each other on whether human intelligence is broadly general or highly specialised, with Hassabis saying that LeCun’s view was “plain incorrect” because he was “confusing general intelligence with universal intelligence”.

Resharing Hassabis’ post on X, tech billionaire Elon Musk said that the Nobel laureate was right.

The case for a new AGI test

Defining AGI as an AI system that can do any intellectual task that a human can, Ng said the term has become a vehicle for hype rather than one with a precise meaning, because businesses have been setting a much lower bar.

He said that the mismatch in definitions can be harmful: it could, for instance, mislead high-school students into avoiding certain fields of study because they believe AGI’s imminent arrival makes those fields pointless. CEOs could also wrongly assume that AI will become more capable within the next two years than it actually will, and change their investment decisions accordingly, Ng said.

Another reason to propose a new AGI test has to do with the shortcomings of predetermined AI benchmarks such as GPQA, AIME and SWE-bench, whose test sets are published in advance. “This means AI teams end up at least indirectly tuning their models to the published test sets. Further, any fixed test set measures only one narrow sliver of intelligence,” Ng said.

“In contrast, in the Turing Test, judges are free to ask any question to probe the model as they please. This lets a judge test how ‘general’ the knowledge of the computer or human really is. Similarly, in the Turing-AGI Test, the judge can design any experience, which is not revealed in advance to the AI (or human subject) being tested. This is a better way to measure generality of AI than a predetermined test set,” he added.

Emphasising the need to recalibrate society’s expectations on AI, Ng said that defusing the hype around AGI would reduce the chance of a bubble and create a more reliable path to continued investment in AI.

“This will let us keep on driving forward real technological progress and building valuable applications, even ones that fall well short of AGI […] And we can be confident that if a company passes [the Turing-AGI] test, they will have created more than just a marketing release; it will be something incredibly valuable,” he said.
